With the November 2022 release of OpenAI’s ChatGPT, the rate of advance in AI has seemingly gone from meteoric to meteoric on steroids. But many commentators have expressed doubts that Large Language Models such as ChatGPT are truly intelligent.

What do advanced AI systems need in order to be able to think rationally? Not just give the impression that they are doing so (to a lay and impressionable observer), but for real…?

Consider the following: If all cats are crazy, and Jonathan is a cat, then Jonathan is crazy.

This is an example of deduction, one of the three primary modes of reasoning (along with induction and abduction) underlying critical thought and general problem solving. Any advanced AI system currently on the drawing board with ambitions of becoming a general problem solver, or AGI (Artificial General Intelligence), will unavoidably need to perform reliable deduction somehow, either as an explicit high-level function or as an emergent property of its low-level behaviour, and every AI researcher with an interest in AGI – or AI company with ambitions to become an AGI company – needs to know about deduction.

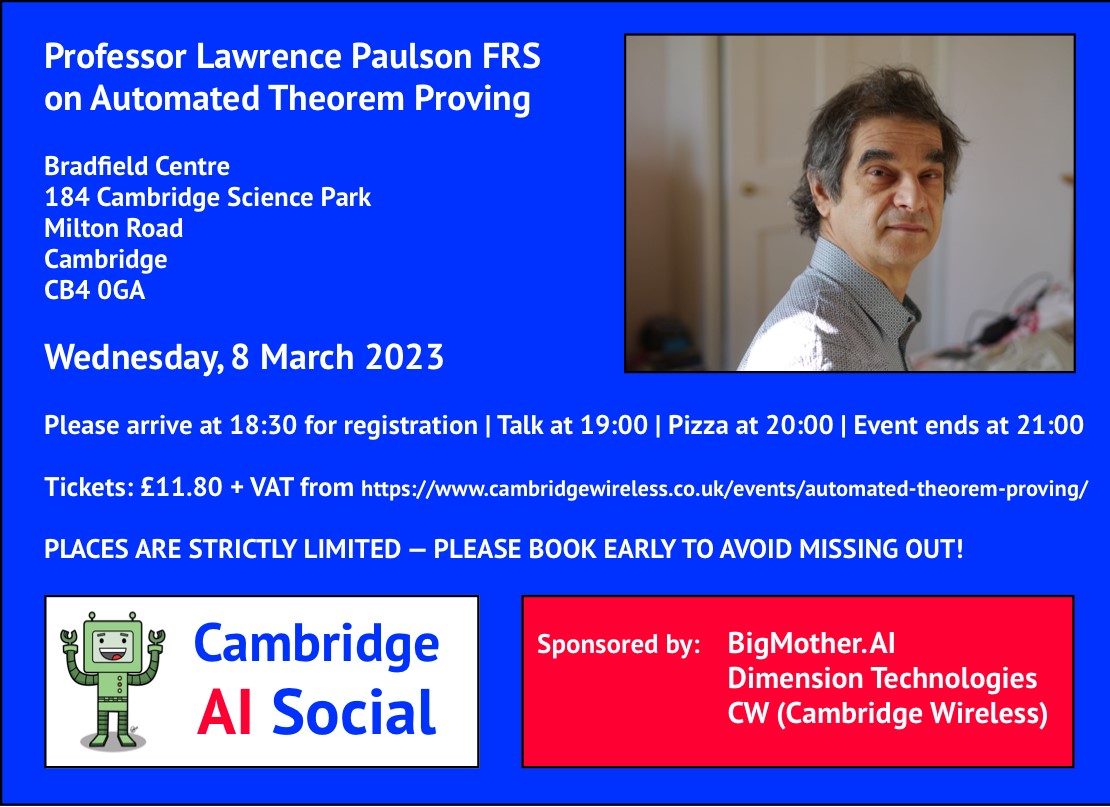

The art of getting a mindless automaton (a.k.a. computer) to perform deduction is called Automated Theorem Proving (ATP), and Professor Paulson has been a recognised world leader in ATP for over 40 years. Who better than Professor Paulson to deliver the inaugural CAIS talk at the Bradfield Centre, Cambridge, on Wednesday 8 March? No one, that’s who!

About the talk

Title: “Automated Theorem Proving: a Technology Roadmap”

Abstract: The technology of automated deduction has a long pedigree. For ordinary first-order logic, the basic techniques had all been invented by 1965: DPLL (for large Boolean problems) and the tableau and resolution calculi (for quantifiers). The relationship between automated deduction and AI has been complex: does intelligence emerge from deduction, or is it the other way around? Interactive theorem proving further complicates the picture, with a human user working in a formal calculus much stronger than first-order logic on huge, open-ended verification problems and needing maximum automation. Isabelle is an example of a sophisticated interactive prover that also relies heavily on automatic technologies through its nitpick and sledgehammer subsystems. The talk will give an architectural overview of Isabelle and its associated tools. The speaker will also speculate on how future developments, especially machine learning, could assist (not replace) the user.

Sponsors

CAIS would not be possible without the support of its sponsors: one-man AGI non-profit BigMother.AI, AI-driven-financial-forecasting startup Dimension Technologies, and (for this event) membership organisation and technology community CW (Cambridge Wireless).

Please note

- This is an in-person only event, and will not be livestreamed.

- A recording of the talk, and images of the event, will subsequently be posted online.

- In particular, CAIS may license the event video to e.g. commercial VoD channels.

- Please do not attend if you have COVID-, cold-, or flu-like symptoms.

- Places are strictly limited. Please book early to avoid missing out!

- CAIS reserve the right to refuse admission to anyone for any reason.

You can follow @CambWireless on Twitter and tweet about this event using #CWAI.