When will my Smartphone be smarter than me?

This article is from the CW Journal archive.

You are pretty smart. In fact, as you are a CWJ reader, it's reasonable to say that you are very smart. But the human race evolves only over very long timescales and the chances are that we aren't getting much smarter any time soon. Processing power, by contrast, evolves rapidly: Moore's Law may not be eternal in its original form, but nonetheless at some point the phone in your pocket will overtake the wetware in your head.

But how soon? That's the question posed by James Tagg, the former CTO of international MVNO Truphone and the inventor of the capacitive touch screen. He's been looking at the nature of intelligence through the lens of a computer scientist. He argues that while we can't see now how Moore's Law can be sustained any further, that was also true in the past and it kept on working anyway. It seems reasonable to assume that this will continue.

So we take it as given that processing power will continue to increase. The next part of the problem is actually deciding at what point a smartphone becomes smarter than us. Is the brain, as MIT supposes, a single pipeline computer which is very good at task switching? How smart are we?


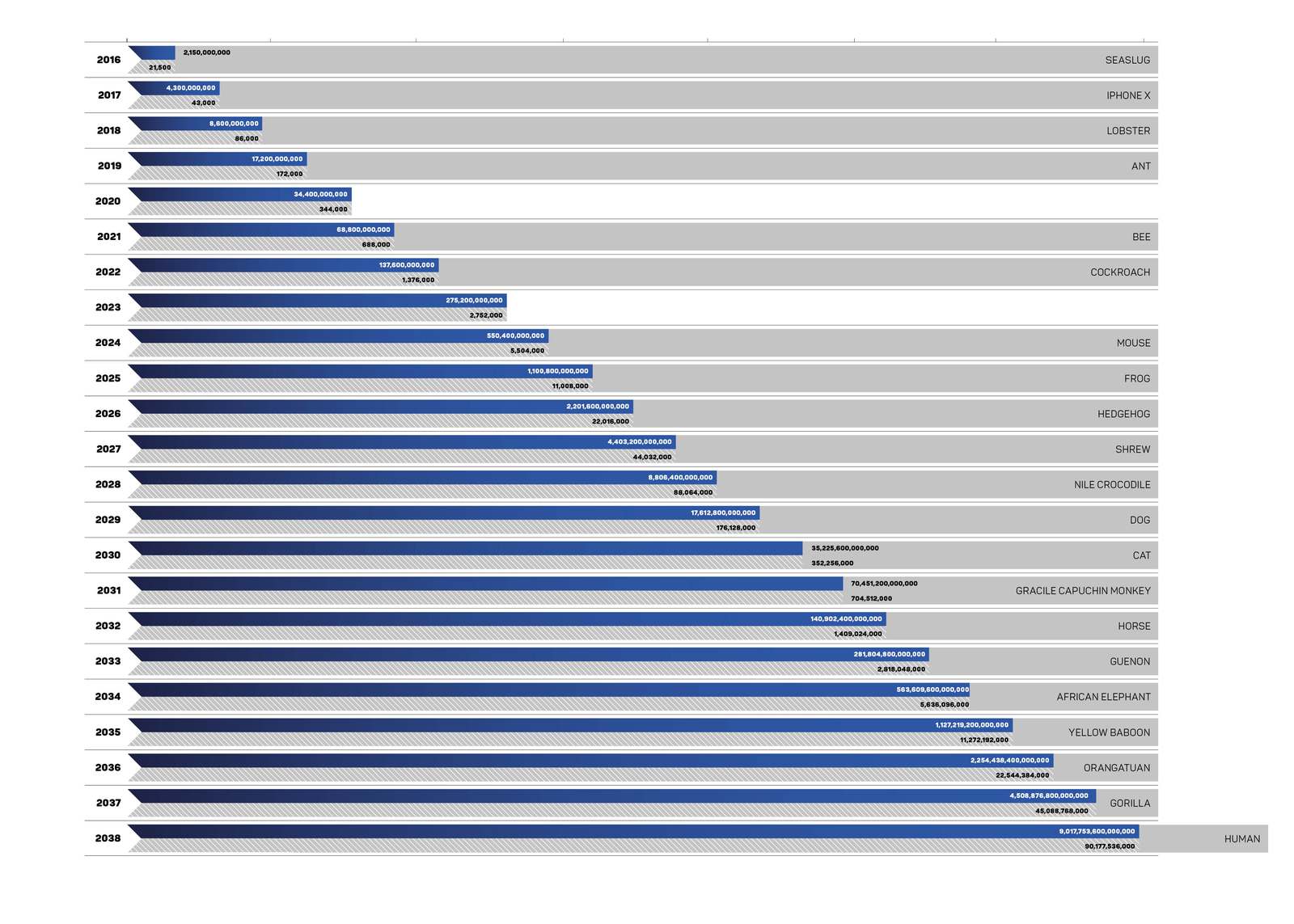

If we assume that a computer gate, which is a handful of transistors, is about as powerful as a synapse, we can project the growth of processing power ahead and predict that we should see phones as smart as humans - that is, with as many gates in them as a human has brain synapses - in 2038. This does rely on the assumption that the human brain is a classical pipeline computer.

But it could be a bit more complicated than that. Tagg suggests that just as phones nowadays have many cores, people have many different centres in their brains. Some of these do light housekeeping, others heavy lifting.

One task calling for massive processing power is vision, and our brain is probably at least as big a factor in what we see as our eyes are. Indeed, as Ian Hosking of the University of Cambridge engineering department is fond of saying: "believing is seeing".

Tagg explains that human sight uses two vision systems:

"One system forms a conscious model of the room. The other, a secondary system in the base of the brain, understands shape and danger."

"Playing music is another hugely brain-processor-intensive task," he adds. "Reading a score and playing music while in an fMRI scanner shows very much more of the brain working than pretty much any other task."

Another example that Tagg gives of the difference between people and current AI systems is that of a man running through an airport and wanting to shout to his phone "when's my flight?" or "I've missed my flight, what should I do next?". This is the kind of thing that a person understands and that Siri, Cortana and Bixby do not. Computers can beat grandmasters at chess. Computers can paint: there is an old program called AARON which seems to be truly creative, albeit with a single style. Google's DeepMind can learn to be good at playing computer games. But computers aren't human yet.


Huawei's processor manufacturer Kirin argues that the need for onboard AI and neural nets will become a major driver for increased processor power in phones. It's now about a lot more than rendering the best 3D images for games.

Treating brains as traditional computers doesn't seem likely to give us a true picture. There are organisms which are incredibly simple but still capable of significant computing. The Paramecium is a single-celled organism capable of hunting, problem solving and escaping in a way that shows learning. It's only one cell, so there is evidently some processing going on without neurons.

To model a Paramecium would require a fairly modest processor with just 100,000 gates. But remember a Paramecium is just a single cell. If one cell is 100,000 gates then we are a long way off building a processor the size of a brain, let alone a brain the size of a planet. If Moore's Law holds steady we will get there, but it might take 30 years longer than the one-synapse equals one-gate calculation would suggest: think 2080 and beyond.
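The arithmetic behind that kind of delay can be sketched in a few lines. This is a rough illustration rather than Tagg's own calculation: it assumes that the gate budget has to grow by the full factor of 100,000 quoted above, and that gate counts double at a fixed interval (the two-year and 18-month periods below are common statements of Moore's Law, not figures from this article). The function name `extra_years` is hypothetical.

```python
import math


def extra_years(scale_factor: float, doubling_period_years: float = 2.0) -> float:
    """Years of steady doubling needed to multiply a gate count by scale_factor.

    Assumes idealised Moore's-Law growth: one doubling every
    doubling_period_years, with no slowdown.
    """
    if scale_factor < 1 or doubling_period_years <= 0:
        raise ValueError("scale_factor must be >= 1 and the period positive")
    doublings = math.log2(scale_factor)  # number of doublings required
    return doublings * doubling_period_years


# From the article: roughly 100,000 gates to model one Paramecium cell.
GATES_PER_CELL = 100_000

# Assumed doubling periods (not from the article).
for period in (1.5, 2.0):
    delay = extra_years(GATES_PER_CELL, period)
    print(f"doubling every {period} years: about {delay:.0f} extra years")
```

A factor of 100,000 is between 16 and 17 doublings, so under these assumptions the extra wait comes out at roughly 25 to 33 years, the same order as the 30 years mentioned above. A slower doubling rate would push the date out further.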

Of course, by finding out what's happening in the brain we might be able to develop computing techniques which will close that gap. Or perhaps that's the new knowledge that will continue to power Moore's Law, and it'll still be a long wait.

There may be some things going on in the brain which resemble the new kinds of computing that we are only just beginning to experiment with: quantum computing and non-causal computing. While the quantum computers we build need to be super-cooled, we've learnt that the natural world can harness quantum effects and that photosynthesis is a quantum process. Perhaps we'll learn to build computers that can do what nature does, or some of what nature does.

We might not manage to build machines that can do everything, however. There are mathematical statements of which humans can perceive the truth but which can be shown mathematically to be unsolvable by computers as we understand them. Tagg also argues that emotions like compassion are unlikely to be found in artificial intelligences.

Tagg told CWJ that his model of intelligence is two-dimensional, measured on axes of raw power and creative ability. This is important in the mobile phone industry because while we need ever more power in the handset, the handset sometimes needs to offload some processing to the cloud. Even so, the handsets of the future may be thin but they won't be thin clients. Tagg argues that part of the reason we need more power is to be better at framing the problem which is then sent to the cloud. The chips of tomorrow will provide the processing grunt needed to feed the neural nets providing the creativity.

The race of our future phones, and their probable offboard cloud friends, against the apparently ever-receding target of replicating the human brain will be an interesting one to watch.

Simon Rockman bridges writing about technology and implementing it. As the editor of UK5G Innovation Briefing, he visits many of the 5G applications. As Chief of Staff at Telet Research, he works with a team installing 5G networks in not-spots. An experienced technology writer, he was the editor of Personal Computer World in the late 1980s and went on to found What Mobile magazine, which he ran for ten years, and has reviewed over 300 handsets. As the mobile correspondent for The Register, he championed CW, writing a number of articles supporting the organisation. He has also had senior roles in telecoms, having been the Creative Experience Director at Motorola, where he looked at new uses for mobile, and Head of Requirements at Sony Ericsson, where he worked on innovative devices at entry level. He was the Head of the Mobile Money Information Exchange at the GSMA and has launched Fuss Free Phones, an MVNO aimed at older users.